Intelligence, Built by Humans

Artificial Intelligence isn't magic. It’s a technology that allows computers to learn from experience, adjust to new inputs, and perform human-like tasks.

More Than Just Chatbots

AI is no longer just an experiment. It is the infrastructure of our modern world.

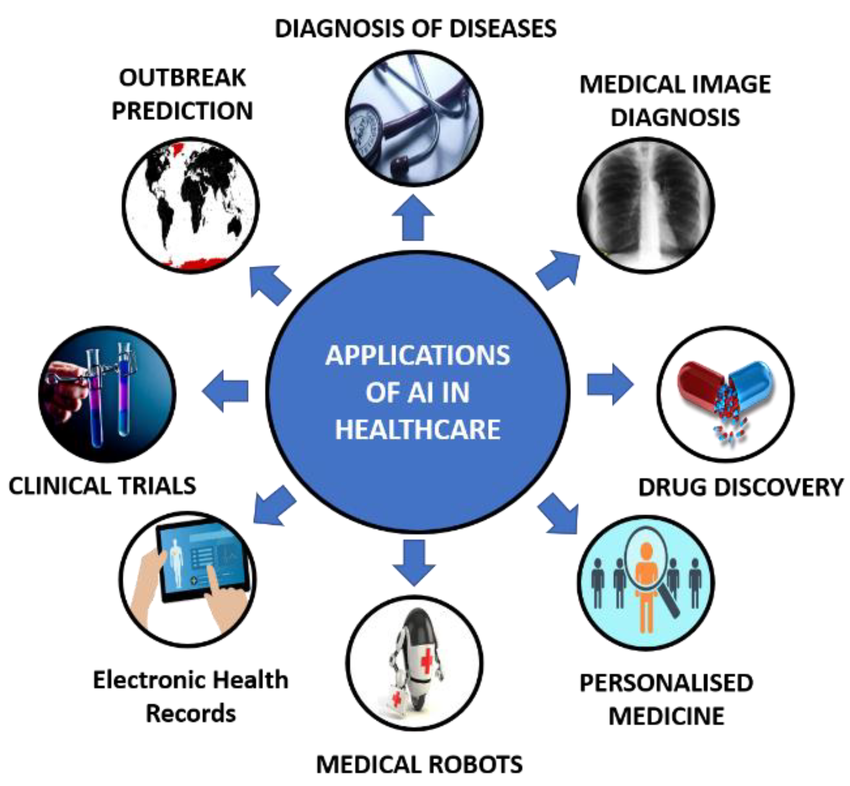

Healthcare: Saving Lives

AI systems now assist in reading medical images with accuracy that can match or exceed that of expert radiologists, supporting earlier cancer detection and more timely treatment (Bi et al., 2019). In intensive care units, predictive models that track vital signs can warn clinicians hours before a patient’s condition becomes critical (Eisemann et al., 2025).

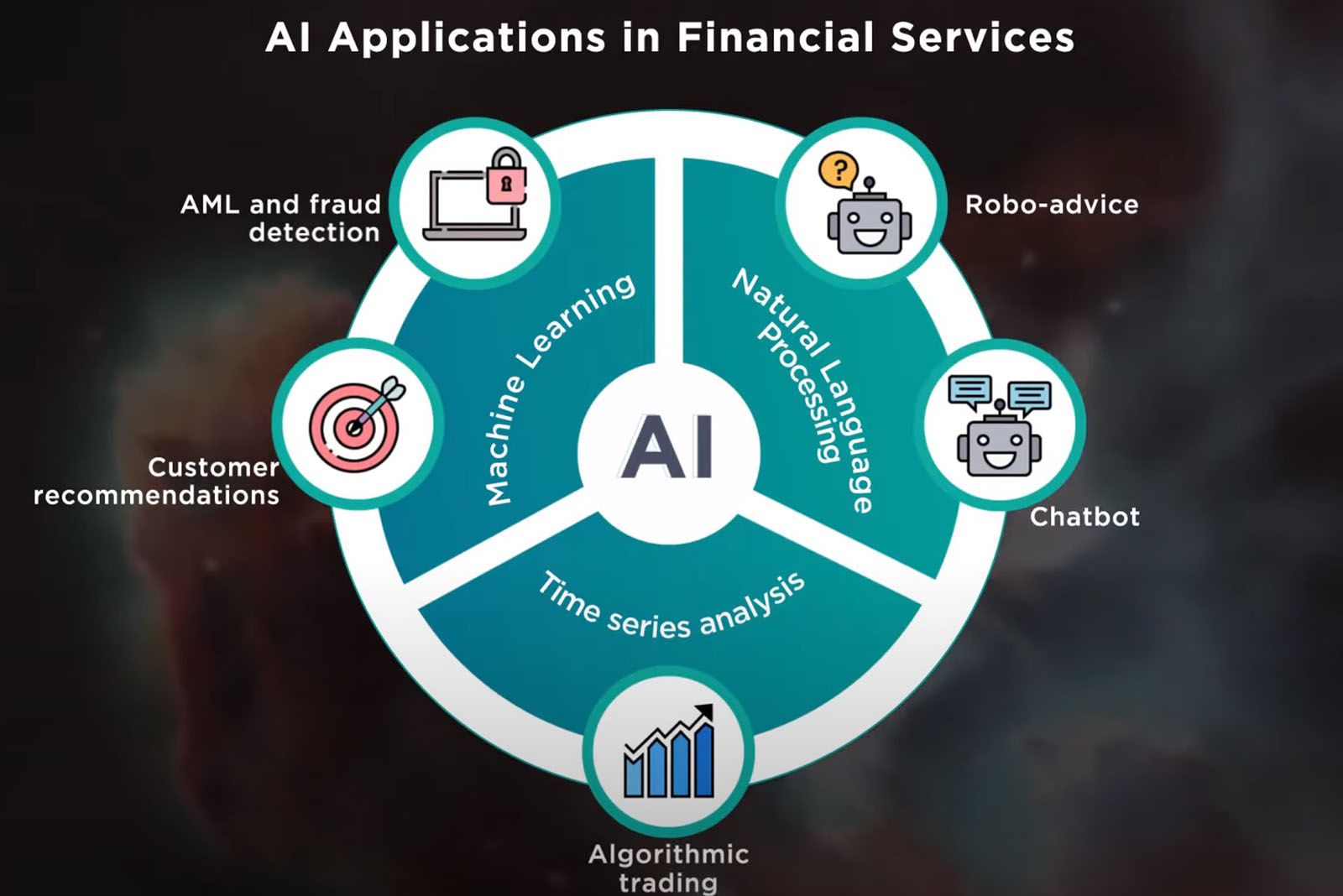

Finance: The Speed of Trust

Financial markets use AI systems that score thousands of transactions per second for fraud risk in real time (IBM, 2025). Large institutions report that these platforms screen billions of payments annually and help prevent significant amounts of fraud and money laundering (Finance Alliance, 2025).

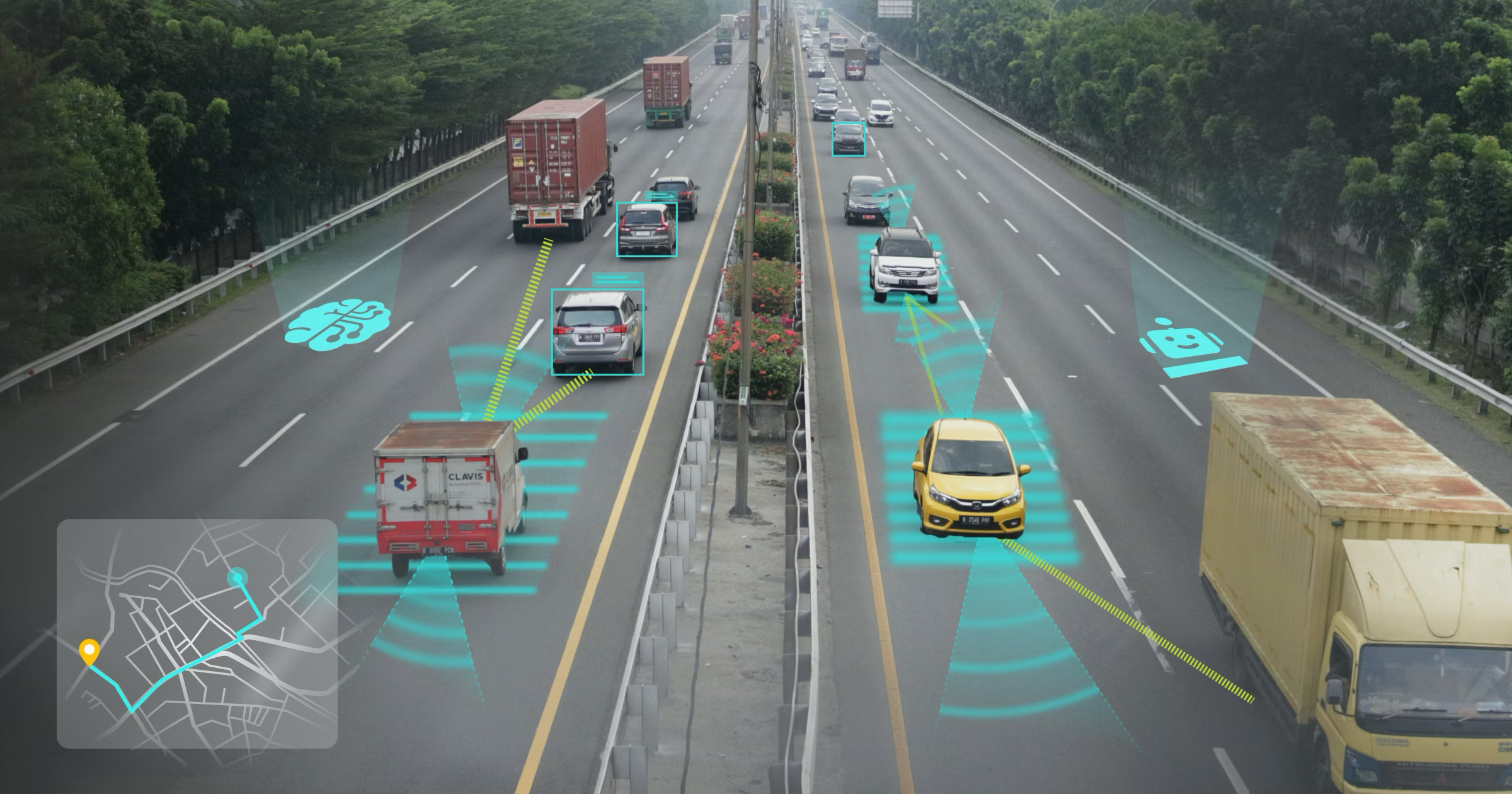

Transport: Moving Safely

Traffic safety research shows that driver-related factors such as distraction, speeding, and impairment contribute to the majority of road crashes (World Health Organization, 2019). AI-enabled driver-assistance and autonomous systems aim to reduce this risk by monitoring the environment continuously and reacting faster than human drivers (Khattak et al., 2021).

Artificial Intelligence already shapes many aspects of daily life. As systems grow more capable, a central question emerges:

How can we ensure AI remains safe, reliable, and aligned with human values?

AI Safety is the scientific and engineering discipline dedicated to answering that question. It focuses on designing, testing, and governing AI systems so they do not cause unintended or harmful outcomes.

Governance: Creating laws, ethical standards, and oversight committees to ensure AI developers are held accountable.

To be considered "Safe," an AI system must satisfy three core principles:

Reliable

(Robustness)

It keeps working safely, even in chaotic conditions or when it encounters unexpected data.

Transparent

(Interpretability)

We shouldn't blindly trust a "black box." Safety means building tools to let us see inside the AI's "brain" to understand its reasoning.

Black Box AI: A system so complex that even its creators cannot explain exactly how it reached a specific decision or answer.

Helpful

(Alignment)

AI should do what we mean, not just what we say. It must be designed to respect human values and avoid finding dangerous loopholes to achieve its goals.

What Happens When We Get It Wrong?

From hidden biases to loss of control, the risks are real.

Explore the Perils of AI